Keep sensitive data secure during chatbot interactions with a privacy proxy

De-identify sensitive free-text data in real time during chatbot interactions so that it never reaches the LLM.

1000+ data engineering hours saved

35+ detected PII entity types

<0 min real-time redaction

Real-time sensitive data protection for AI

Prevent sensitive data leakage

Automatically detect and de-identify dozens of sensitive entity types in free-text data to keep private information out of your chatbot interactions.

Protect user input

Safely leverage user inputs by detecting and removing Personally Identifiable Information (PII) or Protected Health Information (PHI) in real time.

Control data access

With reversible tokens, the chatbot can display the original text to users while ensuring the LLM processes only the redacted data.

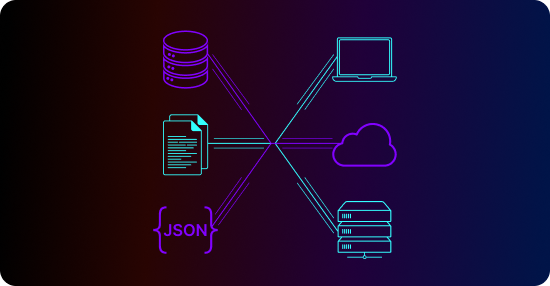

Block sensitive data from being processed by LLMs.

Tokenized data redaction

Replace sensitive data with reversible tokens so that chatbot prompts stay consistent with the underlying RAG system and retrieval quality is preserved.
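To make the idea of consistent, reversible tokens concrete, here is a minimal sketch. It is not Tonic Textual's API: the `TokenVault` class, the token format, and the two regular expressions are hypothetical stand-ins chosen for illustration, and a production detector would rely on NER models rather than regexes.

```python
import re

# Hypothetical patterns for illustration only; real detection would use NER models.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
}


class TokenVault:
    """Maps sensitive values to stable tokens and back (assumed design)."""

    def __init__(self):
        self._token_by_value = {}  # (label, value) -> token
        self._value_by_token = {}  # token -> original value
        self._counts = {}          # label -> number of tokens issued

    def _token_for(self, label, value):
        key = (label, value)
        if key not in self._token_by_value:
            self._counts[label] = self._counts.get(label, 0) + 1
            token = f"[{label}_{self._counts[label]}]"
            self._token_by_value[key] = token
            self._value_by_token[token] = value
        return self._token_by_value[key]

    def tokenize(self, text):
        """Replace each detected value with its token; repeats reuse the same token."""
        for label, pattern in PATTERNS.items():
            text = pattern.sub(
                lambda m, label=label: self._token_for(label, m.group(0)), text
            )
        return text

    def detokenize(self, text):
        """Restore the original values for display to the user."""
        for token, value in self._value_by_token.items():
            text = text.replace(token, value)
        return text


vault = TokenVault()
stored_doc = vault.tokenize("Contact jane@example.com about the invoice.")
user_prompt = vault.tokenize("Did jane@example.com reply? Call 555-123-4567.")
```

Because the same value always maps to the same token, a tokenized prompt and a tokenized document indexed for retrieval refer to the same entity in the same way, which is the consistency property described above.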

Multilingual Named Entity Recognition (NER)

Automatically identify dozens of sensitive entity types in free-text data with Textual’s proprietary, best-in-class multilingual machine learning models for NER.

The Tonic.ai product suite

Tonic Fabricate

AI-powered synthetic data from scratch and mock APIs

Tonic Structural

Modern test data management with high-fidelity data de-identification

Tonic Textual

Unstructured data redaction and synthesis for AI model training

Frequently asked questions

An LLM privacy proxy from Tonic.ai sits between users, applications, and large language models to prevent sensitive data from being exposed to LLMs. Tonic.ai thus enables safe LLM usage by transforming, filtering, or replacing sensitive inputs before they reach external or internal models.
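The request flow can be sketched in a few lines. This is an illustrative outline under stated assumptions, not Tonic.ai's implementation: `privacy_proxy`, the `[EMAIL_n]` token format, and the single email pattern are hypothetical, and `call_llm` stands in for whatever model client an application uses.

```python
import re
from typing import Callable

# Hypothetical single-entity detector; a real proxy would cover many entity types.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def privacy_proxy(prompt: str, call_llm: Callable[[str], str]) -> str:
    """Redact the prompt, forward it to the model, then restore values in the reply."""
    tokens = {}  # original value -> token, scoped to this request

    def swap(match):
        value = match.group(0)
        # Reuse the existing token for a repeated value, otherwise issue the next one.
        return tokens.setdefault(value, f"[EMAIL_{len(tokens) + 1}]")

    redacted = EMAIL.sub(swap, prompt)
    reply = call_llm(redacted)  # the model only ever sees the redacted text

    for value, token in tokens.items():
        reply = reply.replace(token, value)
    return reply


# Usage with a stub model that records what it received.
seen_by_model = []


def echo_model(text: str) -> str:
    seen_by_model.append(text)
    return "Noted: " + text


answer = privacy_proxy("Send the report to sam@corp.example", echo_model)
```

Here the stub model receives only the tokenized prompt, while the caller gets a reply with the original address restored.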

Some LLMs can inadvertently process or retain sensitive information included in prompts. An LLM privacy proxy reduces the risk of data leakage, regulatory violations, and accidental exposure when teams use AI across engineering, support, and analytics workflows.

The proxy can safeguard structured records, free-text inputs, and contextual metadata that may contain PII, financial details, or proprietary business information.

Rather than simply blocking data, Tonic.ai replaces sensitive values with realistic synthetic substitutes that maintain context and intent so LLM responses remain accurate and useful while sensitive details are safely removed.
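As a rough illustration of substitution rather than blocking, the sketch below swaps each detected email address for a realistic-looking synthetic one. Everything in it is an assumption made for the example: the `synthesize_email` and `substitute` helpers, the small pool of fake usernames, and the hash-based selection are hypothetical and do not describe how Tonic.ai generates synthetic values.

```python
import hashlib
import re

# Hypothetical detector and replacement pool, for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
FAKE_USERS = ["alex.rivera", "sam.patel", "jordan.lee", "casey.kim"]


def synthesize_email(real: str) -> str:
    """Derive a stable synthetic address from the real one via a hash."""
    digest = hashlib.sha256(real.lower().encode("utf-8")).digest()
    user = FAKE_USERS[digest[0] % len(FAKE_USERS)]
    # A two-digit hex suffix reduces collisions between different real addresses.
    return f"{user}{digest[1]:02x}@example.com"


def substitute(text: str) -> str:
    """Replace every detected address with its synthetic counterpart."""
    return EMAIL.sub(lambda m: synthesize_email(m.group(0)), text)


rewritten = substitute("Reach me at dana@corp.example today")
```

The surrounding sentence is left untouched and the replacement still looks like an email address, so the model keeps the context it needs without seeing the real value.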

AI platform teams, security leaders, and regulated enterprises use Tonic.ai to safely operationalize LLMs while maintaining strong governance and privacy controls.