Maximize model training by securely leveraging your sensitive data

De-identify your sensitive free-text data for use in model training and gain actionable insights to optimize your outcomes, without compromising privacy.

1000

+

Data engineering hours saved

35

+

Detected PII entity types

15

+

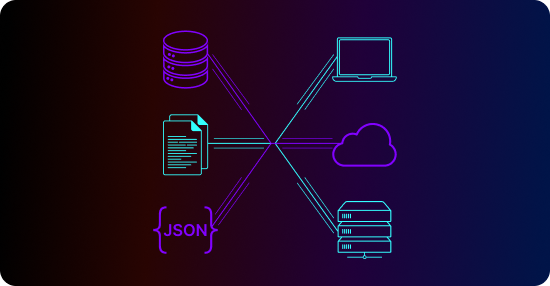

Supported sources and file formats

Unlock your data for LLM fine-tuning and general model development

Prevent sensitive data leakage

Automatically detect and de-identify dozens of sensitive entity types in free-text data to keep private information out of your models.

Preserve data realism

Substitute sensitive entities with realistic synthetic data to create a "hidden-in-plain-sight" solution that enhances both privacy and model quality.

Ensure HIPAA compliance

Partner with our expert determination provider to certify HIPAA-compliant data de-identification.

The all-in-one platform for unstructured data extraction and de-identification

Automated entity-based data synthesis

Replace sensitive data with indistinguishably realistic synthetic values to retain your data’s richness and preserve its statistical properties.

Unstructured data extraction and standardization

Extract data from messy, complex formats, such as PDFs of clinical notes, into a standard format convenient for model training. Supported formats include TXT, DOCX, PDF, CSV, XLSX, TIFF, XML, PNG, JPEG, JSON, and more.

Multilingual Named Entity Recognition (NER)

Automatically identify dozens of sensitive entity types in free-text data with Tonic Textual's proprietary, best-in-class multilingual machine learning models for NER.
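To make the detect-then-substitute idea concrete, here is a minimal sketch in Python. It is not Tonic Textual's API or implementation: the two regular expressions stand in for a trained NER model, and the names `PATTERNS`, `synthesize`, and `deidentify` are hypothetical. It assumes a hash-based mapping so that the same original value always receives the same synthetic replacement.

```python
import hashlib
import re

# Hypothetical detectors for two entity types. A real NER model would
# recognize many more types and would not rely on fixed patterns.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
}


def _digest(value: str) -> int:
    """Map a string to a stable integer so replacements are repeatable."""
    return int(hashlib.sha256(value.encode("utf-8")).hexdigest(), 16)


def synthesize(entity_type: str, value: str) -> str:
    """Return a realistic-looking stand-in derived from the original value."""
    n = _digest(value)
    if entity_type == "EMAIL":
        return f"user{n % 100000:05d}@example.com"
    # PHONE: keep the NNN-NNN-NNNN shape, with a fixed 555 prefix.
    return f"555-{n % 1000:03d}-{(n // 1000) % 10000:04d}"


def deidentify(text: str) -> str:
    """Replace every detected entity with its synthetic counterpart."""
    for entity_type, pattern in PATTERNS.items():
        text = pattern.sub(
            lambda m, t=entity_type: synthesize(t, m.group(0)), text
        )
    return text


if __name__ == "__main__":
    print(deidentify("Contact jane.doe@clinic.org or 212-867-5309 today."))
```

Because the replacement is derived from a hash of the original value, a given email or phone number maps to the same synthetic value everywhere it appears, which keeps references consistent across a document while the surrounding text stays untouched.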

The Tonic.ai product suite

Tonic Fabricate

AI-powered synthetic data from scratch and mock APIs

Tonic Structural

Modern test data management with high-fidelity data de-identification

Tonic Textual

Unstructured data redaction and synthesis for AI model training

Frequently asked questions

Tonic.ai enables teams to train, test, and validate machine learning models using privacy-safe data that reflects real-world patterns without exposing sensitive information.

Production datasets often contain regulated or proprietary information that cannot be freely shared with data science teams or external partners. This creates delays, limits experimentation, and increases compliance risk during model development.

Yes. Teams can use Tonic.ai to generate consistent or varied datasets on demand, making it easier to compare model performance, run experiments, and iterate without reintroducing privacy risk.

By preserving statistical properties and real-world data behavior, Tonic.ai allows models to learn from representative data scenarios rather than oversimplified or overly sanitized datasets.

Using synthetic and de-identified data helps organizations reduce exposure to sensitive information while supporting internal governance, audit requirements, and responsible AI practices.
